A Practical Framework for Using AI in Land Record Indexing (Without Compromising Accuracy)

Artificial intelligence is increasingly part of conversations about modernizing government recordkeeping. For land record offices facing staff constraints and growing volumes, AI-powered indexing tools can look like a practical way to move faster.

But land records are different. These documents establish legal rights, support taxation, and serve as authoritative public records. Even minor indexing errors can create significant legal and operational risk. That’s why applying AI for land records requires more caution than “set-it-and-forget-it” automation allows.

AI can assist with extraction and classification, but it cannot replace human judgment. In practice, full automation without review introduces unacceptable accuracy and compliance risks.

This article outlines a practical, compliance-first framework for using AI responsibly in land record indexing—one that blends automation with structured human oversight. It’s designed to help clerks and recorders evaluate AI tools realistically, challenge AI-only claims, and move forward with confidence.

What Does “Human-in-the-Loop” Mean in Land Record Indexing?

At its core, a human-in-the-loop approach means AI supports the work, but people remain responsible for accuracy. In land record indexing, this model is essential for maintaining trust and legal reliability.

A Plain-Language Definition

In a human-in-the-loop records workflow, AI extracts and suggests indexing data, while trained reviewers validate every critical field before records are finalized. AI accelerates routine tasks; humans ensure correctness.

This is very different from AI-only indexing, where extracted data flows directly into the system without meaningful review. For land records, that level of automation creates risk rather than efficiency.

How Human-in-the-Loop Indexing Works in Practice

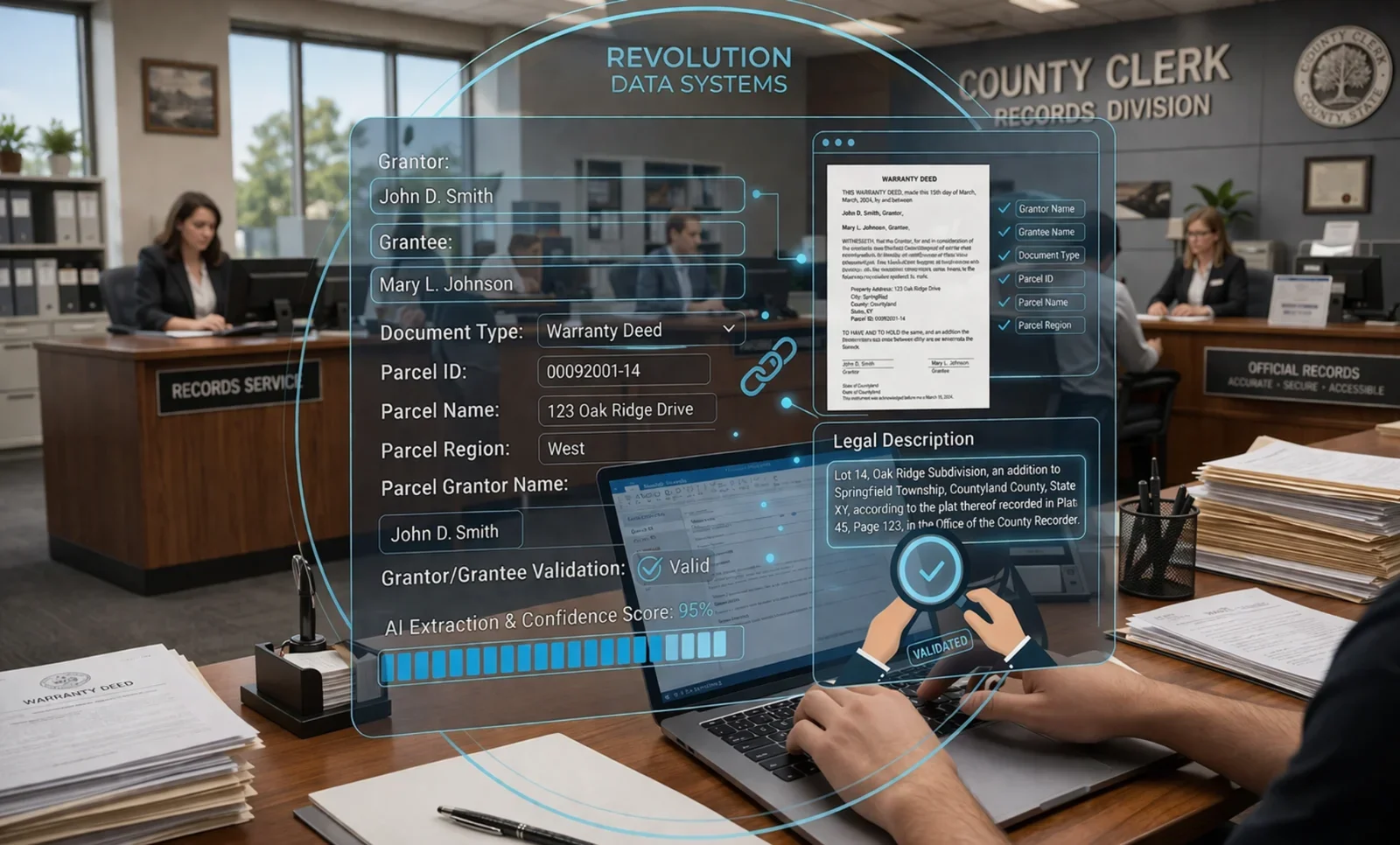

In AI-assisted land records workflows, AI typically performs an initial pass to identify document types and extract names, dates, and parcel references. The system may also assign confidence scores to each field.

Human reviewers then step in to confirm or correct the data before it is added to the official index. This process is a core part of a defensible land record verification process, not an optional extra.
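As a rough illustration of the hand-off described above, the sketch below models one AI-proposed value together with its confidence score and the reviewer's decision. The class name `ExtractedField`, its attributes, and the `ready_for_index` helper are hypothetical names chosen for this example, not part of any particular indexing product.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExtractedField:
    """One AI-proposed index value awaiting human confirmation (hypothetical model)."""
    name: str                              # e.g. "grantor", "document_type"
    ai_value: str                          # value proposed by the AI pass
    confidence: float                      # model-reported score, assumed to be in [0.0, 1.0]
    reviewed_value: Optional[str] = None   # set once a reviewer confirms or corrects
    reviewed_by: Optional[str] = None      # reviewer identifier, None until reviewed

    def confirm(self, reviewer: str, corrected: Optional[str] = None) -> None:
        """Record the reviewer's decision; keep the AI value unless a correction is given."""
        self.reviewed_value = corrected if corrected is not None else self.ai_value
        self.reviewed_by = reviewer

    @property
    def is_validated(self) -> bool:
        return self.reviewed_by is not None


def ready_for_index(fields: List[ExtractedField]) -> bool:
    """Allow a record into the official index only when every field has been reviewed."""
    return all(f.is_validated for f in fields)


fields = [
    ExtractedField("grantor", "JOHN Q SMITH JR", 0.97),
    ExtractedField("parcel_id", "12-034-O56", 0.61),   # letter O misread for zero
]
fields[0].confirm("reviewer_a")                          # AI value accepted as-is
fields[1].confirm("reviewer_a", corrected="12-034-056")  # reviewer fixes the parcel ID
print(ready_for_index(fields))
```

The point of the design is that the AI value and the reviewed value are stored separately, so nothing reaches the index on the AI's say-so alone.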

Where Human Judgment Matters Most

Certain elements consistently require human review, including:

Grantor and grantee name accuracy

Document type classification

Legal description interpretation

Cross-checking parcel references

By combining AI speed with human accountability, offices can reduce error rates and protect the accuracy of land records data.

Where Do AI Indexing Errors Most Commonly Occur in Land Records?

AI performs well with clean, standardized documents. Land records rarely meet that standard. Errors tend to appear in predictable areas, often where formatting, handwriting, or legal nuance is involved.

Common Error-Prone Areas

Grantor/grantee fields: Similar names, suffixes, trusts, and estate language are frequently misread or mismatched.

Legal descriptions: Unconventional formatting, abbreviations, or multi-parcel descriptions challenge automated extraction.

Handwritten or marginal notes: AI struggles with annotations, corrections, and older records that include handwritten elements.

Mixed document batches: Deeds, mortgages, releases, and assignments grouped together can lead to incorrect document type classification.

Parcel and reference numbers: Inconsistent numbering formats or embedded references are often misinterpreted.

Why These Errors Matter

When AI errors go uncorrected, they can result in misindexed or unretrievable records, ownership disputes, and long-term data integrity issues. This is why pairing AI document indexing with human review is not a convenience; it is a requirement for reliable public records.

How Do Human Reviewers Validate AI-Extracted Land Record Data?

Human review turns AI output into legally reliable records. The goal is not to re-key documents but to confirm that AI-extracted data meets required accuracy standards.

A Practical Verification Workflow

AI-assisted extraction: AI identifies key fields, including names, document types, dates, and parcel references.

Field confidence scoring: Low-confidence or ambiguous fields are flagged for attention.

Human validation of core fields: Reviewers verify grantor/grantee names, legal descriptions, document type, and parcel identifiers.

Secondary quality checks: A second review ensures compliance with office standards and internal controls.

This land record verification process blends efficiency with accountability. AI accelerates indexing, while human reviewers apply judgment where it matters most, providing the oversight that AI use in public records needs to protect accuracy and trust.
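The confidence-scoring and validation steps above could be sketched as a small routing function. This is a minimal example under stated assumptions: the field names, the `CRITICAL_FIELDS` set, and the 0.90 `PRIORITY_THRESHOLD` are illustrative values, and each office would set its own. Critical fields are always queued for review; the score only decides which items a reviewer sees first.

```python
from typing import Dict, List, Tuple

# Assumed field names for illustration; an office would define its own core fields.
CRITICAL_FIELDS = {"grantor", "grantee", "document_type", "legal_description", "parcel_id"}

# Assumed cut-off below which a field is flagged as low confidence.
PRIORITY_THRESHOLD = 0.90


def build_review_queue(
    confidences: Dict[str, float],
    threshold: float = PRIORITY_THRESHOLD,
) -> List[Tuple[str, str]]:
    """Return (field, reason) pairs, with low-confidence fields listed first."""
    queue = []
    for name, score in confidences.items():
        if score < threshold:
            queue.append((name, "low_confidence"))   # flagged regardless of field type
        elif name in CRITICAL_FIELDS:
            queue.append((name, "critical_field"))   # reviewed even when the score is high
    # Low-confidence items sort ahead of the rest; ties are ordered by field name.
    queue.sort(key=lambda item: (item[1] != "low_confidence", item[0]))
    return queue


scores = {
    "grantor": 0.98,
    "grantee": 0.72,
    "document_type": 0.95,
    "recording_date": 0.99,
    "parcel_id": 0.55,
}
for field, reason in build_review_queue(scores):
    print(field, reason)
```

In this sketch a high-scoring field outside the critical set (here `recording_date`) is not queued. That is a simplifying assumption for the example, not a recommendation to skip review of any field.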

What Happens When AI Indexing Gets It Wrong?

Indexing errors are not just technical issues. They create real legal and operational consequences for land record offices.

Downstream Impacts of Errors

Misindexed or unretrievable documents: Records that cannot be reliably found undermine public access and staff efficiency.

Ownership and lien disputes: Incorrect names or legal descriptions can affect title research and property rights.

Public portal inaccuracies: Errors propagate across systems, damaging confidence in official records.

Costly corrections: Fixing errors after release requires staff time, reprocessing, and sometimes legal review.

These risks highlight why speed alone cannot drive indexing decisions. AI quality control for land records depends on structured human oversight to catch issues before they become liabilities.

Where Human Review Is Required in AI-Assisted Indexing

Even with advanced tools, certain indexing decisions require human judgment. This is where a human-in-the-loop model provides essential safeguards.

Critical Review Points

Grantor and grantee name validation: Confirm spelling, order, suffixes, and entity types.

Legal description confirmation: Verify completeness, parcel count, and formatting accuracy.

Parcel and reference accuracy: Check parcel IDs, book/page references, and cross-links.

Document type classification: Distinguish between similar instruments such as deeds, releases, and assignments.

Exception handling: Address poor scans, duplicate filings, or unusual documents.

Low-confidence field review: Validate fields flagged by AI for ambiguity or uncertainty.

This structured review ensures AI-assisted land records meet required accuracy standards while maintaining accountability.

What Governance Controls Should Exist for AI-Assisted Indexing Projects?

Responsible AI use in land records requires more than good technology—it requires clear governance. Offices should approach AI-assisted indexing with the same risk-management mindset applied to other core recordkeeping functions.

Essential Governance Controls

Defined accuracy standards: Core fields such as grantor/grantee, document type, and legal description should meet strict accuracy thresholds before acceptance.

Audit trails and accountability: Maintain logs showing AI-suggested data, human edits, and final approvals to support transparency and compliance.

Mandatory human QA triggers: Require review for low-confidence fields, complex documents, or non-standard formats.

Clear roles and responsibilities: Make it clear at all times which person or role, rather than the software, is accountable for the final indexed data.

Vendor transparency: Vendors should clearly explain how AI models work, where human review occurs, and how errors are handled.

These controls reinforce oversight of AI in public records and help offices deploy AI for land records without compromising legal defensibility.
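To make the audit-trail control more concrete, here is one possible shape for a log entry that keeps the AI-suggested value, the final value, the reviewer, and the approver together. The `AuditEntry` fields, the helper names, and the JSON Lines output are assumptions made for this sketch; a real system would follow the office's own retention and security requirements.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)          # frozen: entries cannot be modified after creation
class AuditEntry:
    document_id: str
    field_name: str
    ai_value: str                # what the AI suggested
    final_value: str             # what the reviewer confirmed or corrected
    reviewer: str
    approved_by: Optional[str]   # final approver, None until approval is given
    timestamp: str               # UTC time in ISO 8601 format

    @property
    def was_corrected(self) -> bool:
        return self.ai_value != self.final_value


def record_review(log: List[AuditEntry], document_id: str, field_name: str,
                  ai_value: str, final_value: str, reviewer: str,
                  approved_by: Optional[str] = None) -> AuditEntry:
    """Append one review decision to the log and return the new entry."""
    entry = AuditEntry(document_id, field_name, ai_value, final_value, reviewer,
                       approved_by, datetime.now(timezone.utc).isoformat())
    log.append(entry)
    return entry


def correction_rate(log: List[AuditEntry]) -> float:
    """Share of logged fields where the reviewer changed the AI suggestion."""
    return sum(e.was_corrected for e in log) / len(log) if log else 0.0


def to_json_lines(log: List[AuditEntry]) -> str:
    """Serialize the log as one JSON object per line for export or archiving."""
    return "\n".join(json.dumps(asdict(e)) for e in log)


log: List[AuditEntry] = []
record_review(log, "DOC-0001", "grantee", "SMITH FAMILY TRST", "SMITH FAMILY TRUST",
              "reviewer_a", "clerk_b")
record_review(log, "DOC-0001", "document_type", "DEED", "DEED", "reviewer_a", "clerk_b")
print(correction_rate(log))
```

A correction rate tracked per field is one way to check whether a defined accuracy standard is being met and where reviewers spend most of their effort.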

A Practical Human-in-the-Loop Workflow for Land Record Indexing

A clear workflow helps land record offices see how AI and human oversight work together without adding unnecessary complexity. This structure prioritizes accuracy while still improving throughput.

Step-by-Step Workflow

AI-assisted extraction: AI proposes values for names, document type, dates, legal descriptions, and parcel references.

Confidence scoring and flagging: Fields with ambiguity, low confidence, or non-standard formatting are automatically flagged.

Human validation and correction: Reviewers confirm or correct all critical fields, applying judgment where AI falls short. This is the core of AI document indexing with human review.

Secondary QA review: A quality check ensures consistency with office standards and compliance requirements.

Clerk review and acceptance: Final approval remains with the office, reinforcing accountability.

Indexing finalized and stored: Verified data is committed to the official system of record.

This workflow illustrates how a human-in-the-loop approach preserves control, accuracy, and trust while still enabling AI-assisted efficiency.
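One way to enforce the step order above in software is a simple state machine that refuses to skip a stage. The sketch below is illustrative only: the `Stage` names mirror the six steps listed, and the `IndexRecord` class is a hypothetical stand-in for whatever record object an indexing system actually uses.

```python
from enum import Enum


class Stage(Enum):
    AI_EXTRACTED = 1      # AI proposes field values
    FLAGGED = 2           # confidence scoring and flagging complete
    HUMAN_VALIDATED = 3   # reviewer confirmed or corrected critical fields
    QA_REVIEWED = 4       # secondary quality check passed
    CLERK_ACCEPTED = 5    # office gave final approval
    FINALIZED = 6         # committed to the system of record


# Each stage has exactly one permitted successor; FINALIZED has none.
NEXT_STAGE = {
    Stage.AI_EXTRACTED: Stage.FLAGGED,
    Stage.FLAGGED: Stage.HUMAN_VALIDATED,
    Stage.HUMAN_VALIDATED: Stage.QA_REVIEWED,
    Stage.QA_REVIEWED: Stage.CLERK_ACCEPTED,
    Stage.CLERK_ACCEPTED: Stage.FINALIZED,
}


class IndexRecord:
    """Tracks where a document sits in the review workflow (hypothetical class)."""

    def __init__(self, document_id: str) -> None:
        self.document_id = document_id
        self.stage = Stage.AI_EXTRACTED
        self.history = [Stage.AI_EXTRACTED]

    def advance(self, to: Stage) -> None:
        """Move to the next stage, rejecting any attempt to skip or repeat a step."""
        if NEXT_STAGE.get(self.stage) is not to:
            raise ValueError(f"cannot move from {self.stage.name} to {to.name}")
        self.stage = to
        self.history.append(to)


record = IndexRecord("DOC-0001")
record.advance(Stage.FLAGGED)
try:
    record.advance(Stage.FINALIZED)   # skipping human validation is rejected
except ValueError as err:
    print(err)
```

Because finalization is reachable only through the human validation, QA, and clerk acceptance stages, an AI-only path into the official index is ruled out by construction in this sketch.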

How to Move Forward with AI—Without Compromising Land Record Integrity

AI can play a meaningful role in modern land record indexing, but only when accuracy, accountability, and compliance remain non-negotiable. For offices responsible for legally binding public records, fully automated indexing is neither safe nor defensible.

A human-in-the-loop model allows offices to benefit from automation while preserving control over critical decisions. By combining AI-assisted extraction with structured human validation, recorders and clerks can reduce backlogs without sacrificing land records data accuracy or public trust.

When assessing AI for land records, look past marketing claims and focus on how errors are detected, corrected, and prevented. Technology should support your statutory responsibilities, not shift risk onto your office.

Revolution Data Systems (RDS) works with land record offices to implement AI responsibly, with governance controls and human oversight built in from the start. Talk with an RDS expert about responsible, human-verified AI-assisted indexing for your land records.

Frequently Asked Questions About Using AI for Land Record Indexing

What role should humans play in AI-assisted land record indexing?

Humans must validate all legally significant indexing fields before records are finalized. In AI-assisted land records, AI supports data extraction, but trained reviewers confirm accuracy to ensure compliance and trust.

How are AI indexing errors detected and corrected?

Errors are identified through confidence scoring, field flagging, and structured human review. This land record verification process ensures that issues are corrected before records are added to the official index.

Who is accountable when AI-assisted indexing is wrong?

The recording office remains accountable. Oversight of AI in public records requires clear responsibility, audit trails, and documented human approval; AI does not assume legal liability.

How much human review is enough for land records?

Human review is required for all core fields, including names, document type, legal descriptions, and parcel references. This level of AI quality control for land records is necessary to meet accuracy standards.

How does RDS structure human verification into indexing projects?

RDS builds human-in-the-loop verification into every indexing project by requiring human validation, secondary QA, and full auditability. We balance efficiency with legal-grade accuracy.